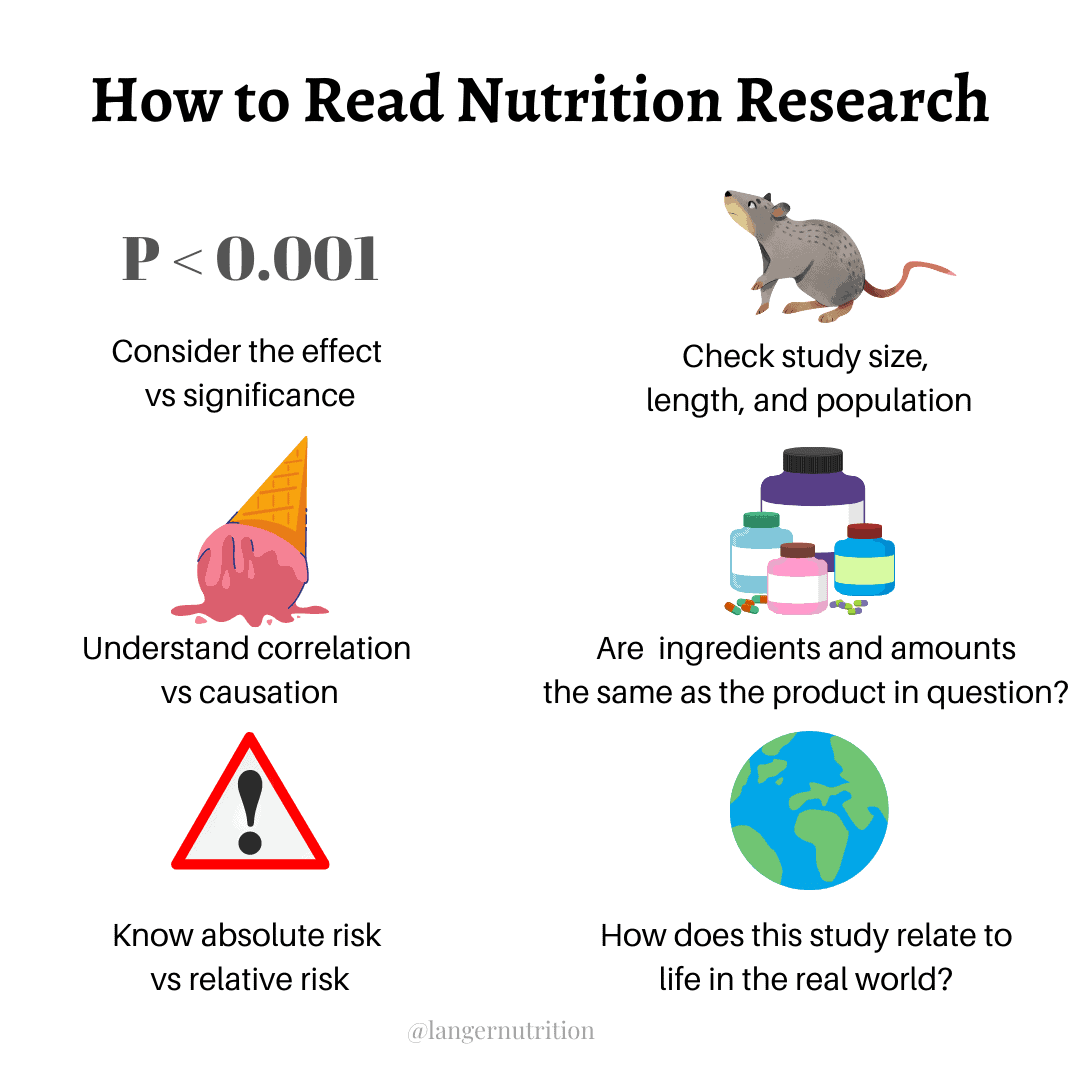

How to Read Nutrition Research: A Primer on The Basics

I recently got into a kerfuffle with two nutritionists about their post claiming that ADHD and depression are closely linked to sugar intake. These people were recommending that people quit sugar altogether to help alleviate symptoms of those conditions.

When some of my followers asked for research to back up these claims, the nutritionists posted links. But the studies they posted didn’t really prove anything.

It’s something I deal with almost on a daily basis: people and companies (especially nutrition MLMs) making nutrition claims about their products or diets, then trying to back those claims up with research that doesn’t establish a meaningful connection between them.

It can be extremely misleading, but that’s an advantage for the seller: using the illusion of ‘research’ to convince us to buy their product or line of thinking, while trusting that we won’t be able to interpret the studies.

It works. I can’t count how many times I’ve posted a debunk of popular diets and have gotten comments on my post like these:

‘But there’s lots of research!’

‘I’ve done my research’

‘I’ve seen studies!’

Except, just because there’s research on something, doesn’t mean it’s good research. And because I don’t want you to be misled ever again, I’m going to give you a very high-level primer on what to look for in nutrition research.

It can take some time and effort, but if you’re looking to invest in your health, it’s worth it. You probably wouldn’t buy a car without doing research, so why buy into what a random person is telling you to do for your nutrition, without doing the same due diligence?

Research can be complicated, even for me. So, we’re going to keep this simple.

1. Read the entire study, not just the abstract.

You’ve got to read the entire study. The top part, or the abstract, is a summary, but it doesn’t often tell you about study design. This is important stuff.

If you can’t access the entire study, try sci-hub, which jumps paywalls, or get someone who works at a hospital or university to get it for you. There’s even a Facebook group called ‘Ask for PDFs From People with Institutional Access,’ which can get you a PDF of the study you need.

Once you have the whole study, here’s what you need to look for:

2. Correlation doesn’t equal causation.

Most shifty nutrition claims can be weeded out using this rule.

Causation is when there is a definite connection showing that one thing actually causes the other thing. Many, many people will make nutrition claims that seem like established causation, but are in fact correlation.

Correlation is when one thing, say, death, appears to be linked to another thing, say, sugar intake. Or, processed meat intake appears to be linked to a greater risk of cancer.

This in no way means that if you eat sugar or processed meats, you’re going to die or get cancer. It means that there may be a link, but in this case, you need to look at confounders.

As in, in people who eat a lot of sugar, what else in their diet or lifestyle may impact their risk of death? Do they also tend to smoke, or lead sedentary lifestyles? Do they eat fewer fruits and vegetables and less fibre than recommended?

A lot of studies control for these things (aka, take them into account and adjust for them), but that’s tough to do. And because you can’t keep entire groups of people (or even a single person) in a controlled environment for an extended period of time, we end up with what amounts to educated guesses about how X food impacts our health. This is common for nutrition research, and it’s just what we need to deal with.
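To make the confounder idea concrete, here’s a small sketch in Python. Every number in it is made up by me purely for illustration (it’s not from any study): in this toy data, smoking is what drives the death rate and sugar does nothing at all, but smokers are more likely to be in the high-sugar group.

```python
from fractions import Fraction

# Made-up counts, purely illustrative: (deaths, people) for each group.
# Smoking drives the death rate here; sugar has no effect within a stratum.
COUNTS = {
    ("smoker", "high_sugar"): (80, 400),
    ("smoker", "low_sugar"): (20, 100),
    ("non_smoker", "high_sugar"): (5, 100),
    ("non_smoker", "low_sugar"): (20, 400),
}


def death_rate(sugar, smoking=None):
    """Death rate for a sugar group, optionally within one smoking stratum."""
    deaths = people = 0
    for (smoke, sug), (d, n) in COUNTS.items():
        if sug == sugar and (smoking is None or smoke == smoking):
            deaths += d
            people += n
    return Fraction(deaths, people)


def risk_ratio(smoking=None):
    """How many times higher the death rate is in the high-sugar group."""
    return death_rate("high_sugar", smoking) / death_rate("low_sugar", smoking)


crude_ratio = risk_ratio()                  # everyone lumped together
smoker_ratio = risk_ratio("smoker")         # smokers only
non_smoker_ratio = risk_ratio("non_smoker") # non-smokers only

print(f"Everyone lumped together: {float(crude_ratio):.3f}x")
print(f"Smokers only:             {float(smoker_ratio):.3f}x")
print(f"Non-smokers only:         {float(non_smoker_ratio):.3f}x")
```

Lumped together, the high-sugar group looks like it dies at roughly twice the rate. Split by smoking status, the difference disappears. That’s the simplest version of what ‘controlling for’ a confounder means; real studies use more sophisticated statistical adjustments, and they can only adjust for the confounders they thought to measure.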

But that doesn’t mean that we can make claims that are or sound definite about stuff we don’t know for sure. You’ll notice that when I write about science, I use a lot of ‘may cause’ or ‘appears to be’ or ‘studies suggest’ language. That’s because I believe in being responsible in terms of communicating what research actually knows, and the limitations around it.

The nutritionists above implied that because studies show that people who eat a lot of sugar seem to be more depressed, sugar intake must cause depression (so buy their quit-sugar program!).

Not so fast!

We know that people who are depressed tend to eat more sugar – because sugar intake releases dopamine, which makes us feel good. Therefore, it’s more likely that depression leads to sugar intake, rather than the other way around. In fact, there is no research that shows that the more sugar you eat, the more depressed you get. Sugar intake may have nothing to do with depression in that way.

See how studies can be manipulated to suit a person’s narrative?

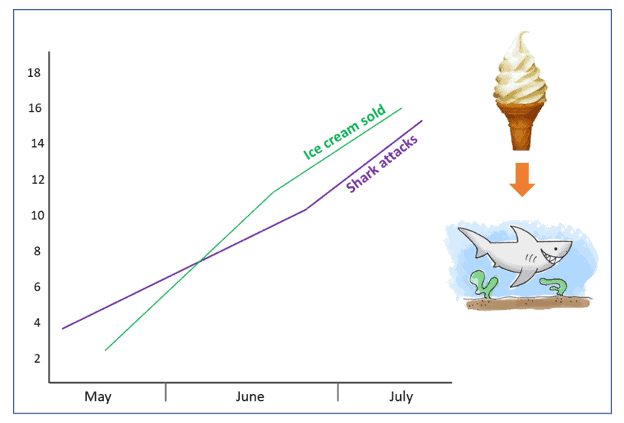

Here’s a great graphic to show you how correlation (ice cream and shark attacks rising in the summer months) doesn’t equal causation (they aren’t linked…at all):

3. Study size, length, and population.

First of all, animal studies can’t be extrapolated to humans. At least, making a claim that a product or ingredient does X for humans, then using a rat study to ‘prove’ it, doesn’t hold water.

We aren’t rodents, and therefore may not react in the same way to the intervention being studied.

You also want to look for the words ‘in-vitro’ and ‘in-vivo.’ In-vitro means ‘in a lab dish,’ which, like animal studies, doesn’t prove that something will have the same effect in humans. In-vivo means ‘in a living subject,’ but make sure that living subject is a human.

You also want to make sure that the sample size is adequate and diverse, so that it better represents the broader population. A study with 10 subjects who are all women between 18 and 25, for example, may not be applicable to people who aren’t in that demographic.

Finally, you want to look at the length of the study. If it was years and years, who’s to say that people didn’t change their diets or habits during that time?

If it was super short, maybe the time frame isn’t realistic to see whether the intervention works, or will continue to work.

4. Ingredients and amounts.

A common tactic of nutrition MLMs is to post studies that appear to prove that their products work. To the layperson, this might look legit, but studies are often about one ingredient in the product, not the product itself.

Take It Works slimming gummies. It Works salespeople are constantly posting that research shows that these gummies ‘burn fat.’ The active ingredient in Slimming Gummies is Morosil, which is derived from blood oranges.

It Works makes the following claims about this product:

Features MOROSIL® Blood Orange extract, clinically proven to shrink waist and hip circumference by inches—even lowering Body Mass Index!†*

Attacks fattening calories that add unwanted inches to your stomach and hips†

Actively shrinks bloated fat cells so you can enjoy a slimmer body†

‘Attacks fattening calories’ – excuse me? Is this the 70s?

There is one study that It Works people are constantly using to lend credibility to their claims about Morosil. But I’m thinking that not many of them read or understood it, because it doesn’t really support Slimming Gummies at all.

First of all, the study looked at Morosil on its own, not Slimming Gummies themselves. The Gummies have other ingredients in them, and as you’ll see below, have a different amount of Morosil in them than what was used in the research.

This is important to know. I see a ton of MLMs using ‘proprietary blends’ that don’t reveal just how much of each ingredient they contain. We can’t compare these ingredients to any available research, because we aren’t sure how the dosage in the blend stacks up to the dosage in the research.

Back to the Morosil research: we know that the study had two groups – one that received a placebo, and one that received 400mg of Morosil. It was double blinded, which is a good thing that you want to look for, because it means that neither the subjects nor the researchers knew who was getting the placebo and who was getting the Morosil.

The group that got the Morosil lost slightly more weight than the placebo group, but wait!

The placebo group also lowered their BMI, and hip and waist circumference. Everyone lost weight.

And look what’s in the abstract:

As the result of stratified analysis, the remaining 6 subjects, whose BMI < 30 as well as WHR 0.85, showed a significant difference between two groups after 12 weeks in all items of body weight, BMI, waist and hip circumference.

Out of the 30 subjects in the Morosil group, only 6 showed a significant result after 12 weeks. And, those people were not classified as ‘obese’ to begin with.

Aside from that:

The study didn’t control for diet. We have no idea what the subjects were eating.

In the small print, It Works recommends that Slimming Gummies be used along with a low-calorie diet. Is it the diet, or the supplements, that causes weight loss?

The study was sponsored by the company that makes Morosil supplements. This is a red flag – research done by companies with a vested interest in the product being studied more often than not turns out in favor of that product.

The dosage that It Works recommends for its Slimming Gummies is 200mg, not the 400mg taken in the study. Even if Morosil were effective, you’d be underdosing with Slimming Gummies.

Aside from all of that, It Works’ claims around these gummies are absurd. The study does not show anything about ‘shrinking bloated fat cells’ or ‘attacking calories.’

And it wouldn’t be right of me not to mention that if these gummies worked, they’d be regulated, they’d be first line treatment by physicians for weight loss, and the weight loss industry would cease to exist.

5. Statistical significance.

We often look at statistical significance (usually reported as a p value) as the gold standard of whether an intervention works, but we tend to ignore the size of the effect.

This drug leads to a significant weight loss!

But hold on, because statistical significance may not be that impressive. It means that an intervention’s effect is unlikely to be down to chance, but as this article outlines, we need to assess what that impact will be – if anything – on the population we’re studying.

I’ve seen nutrition studies in which the effect of a diet intervention was statistically significant (the intervention group lost weight), only to read further and find that the difference was less than a kilogram versus a placebo group. What’s statistically significant isn’t always what we’d consider to be ‘significant’ in daily life.
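If you want to see how a tiny difference can still come out ‘significant,’ here’s a rough sketch in Python. The group sizes, weight losses, and standard deviation are all numbers I invented for illustration, and the test is a simple large-sample z-test, which is an assumption on my part and not what any particular study used.

```python
from math import sqrt
from statistics import NormalDist

# Made-up summary numbers for two groups (illustrative only).
n_per_group = 1000         # participants in each group
mean_loss_diet = 1.5       # kg lost, intervention group
mean_loss_placebo = 1.0    # kg lost, placebo group
sd = 3.0                   # assumed common standard deviation, in kg

# The effect itself: how much more weight the diet group lost.
difference = mean_loss_diet - mean_loss_placebo

# Two-sample z-test (large-sample approximation, equal group sizes).
standard_error = sd * sqrt(2 / n_per_group)
z = difference / standard_error
p_value = 2 * (1 - NormalDist().cdf(abs(z)))

# Cohen's d: the difference expressed in standard deviations.
cohens_d = difference / sd

print(f"Extra weight lost: {difference:.1f} kg")
print(f"p value:           {p_value:.5f}")
print(f"Cohen's d:         {cohens_d:.2f}")
```

With a thousand people per group, half a kilogram of extra weight loss comes out well below the usual 0.05 cutoff, yet the effect size is below what’s conventionally called ‘small.’ The p value tells you the difference probably isn’t a fluke; it says nothing about whether the difference matters.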

Look at the whole picture.

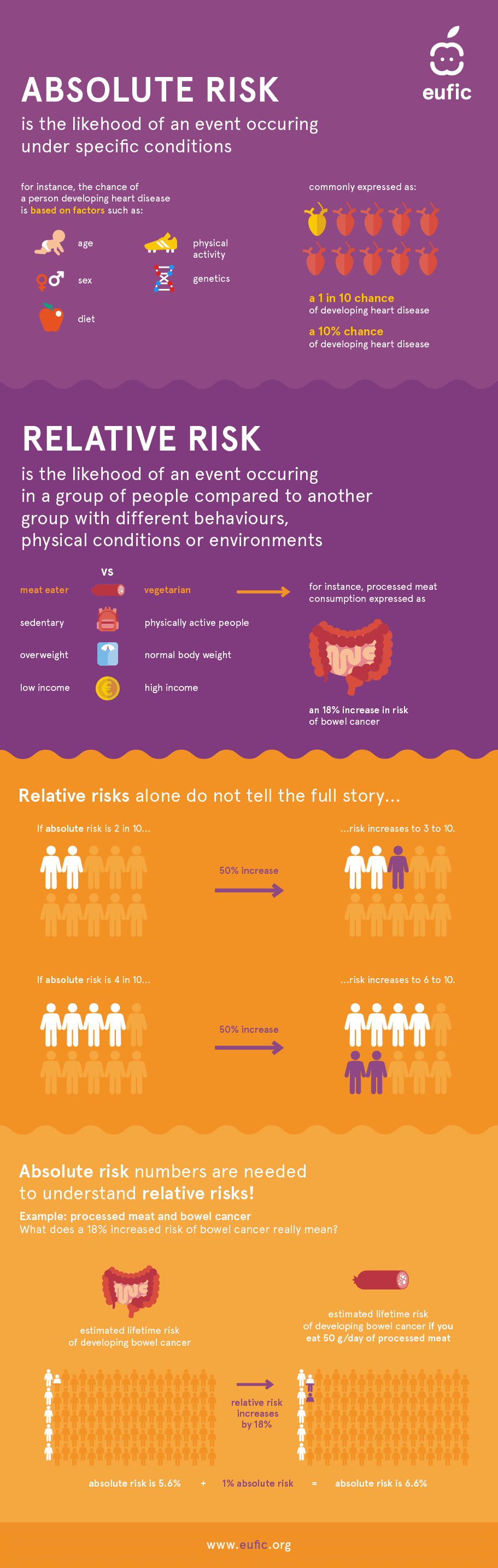

6. Absolute risk vs relative risk.

I can’t write about nutrition research without talking about absolute versus relative risk.

You know how the media often talk in terms of a food or behavior increasing the risk of something bad happening by some percentage?

They often talk about this risk in relative terms, which makes it seem a lot scarier than it really is. A recent headline was that drinking 3 cups of milk a day increases breast cancer risk by 50%.

I wrote about this with the milk and breast cancer study that came out in 2020.

What?

That sounds a bit off, don’t you think? That’s because they’re talking about relative risk, which is the probability of a health event happening in one group, versus another group.

The absolute risk is the risk of this health event happening to you.

I know, it’s annoyingly complicated to wrap your mind around, so let’s put absolute and relative risk into the milk and breast cancer example.

What you should ask when you see a high percentage of risk in a headline is, ‘compared to what?’

Let’s say the risk of breast cancer overall is 1 in 50 (I made these numbers up).

If 3 cups of milk a day causes a 50% increase in breast cancer risk, that sounds like a lot. But in ABSOLUTE terms, it means that your risk of getting breast cancer goes from 1 in 50, to 1.5 in 50.

Phew!
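Here’s the same arithmetic as a few lines of Python, in case you want to plug in the numbers from the next scary headline you see. It uses the made-up 1 in 50 baseline from above; the variable names are my own.

```python
from fractions import Fraction

# Made-up baseline from the example above, plus the headline's 50%.
baseline_risk = Fraction(1, 50)         # 1 in 50
relative_increase = Fraction(50, 100)   # "increases risk by 50%"

# Relative risk multiplies the baseline; it doesn't get added to it.
new_risk = baseline_risk * (1 + relative_increase)

# Absolute increase: the actual change in your chances.
absolute_increase = new_risk - baseline_risk

# Same thing expressed as extra cases among 1,000 people.
extra_cases_per_1000 = absolute_increase * 1000

print(f"Baseline risk:     {float(baseline_risk):.1%}")
print(f"Risk with milk:    {float(new_risk):.1%}")
print(f"Absolute increase: {float(absolute_increase):.1%}")
print(f"Extra cases per 1,000 people: {float(extra_cases_per_1000):.0f}")
```

A 50% relative increase on a 1 in 50 baseline takes you from 2 in 100 to 3 in 100, which is one percentage point in absolute terms. The smaller the baseline risk, the smaller that absolute change gets, no matter how big the relative number in the headline looks.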

The study in question had other major flaws, which I discuss in the linked post. But as far as risk levels, you can see how using relative risk in headlines can cause panic…and a lot of clicks.

This absolute vs relative risk graphic by EUFIC is one of my faves.

All of this being said, it’s possible that some studies that aren’t perfect in their methodology may hold valuable information, and answers to our health questions. Nutrition research is, like I said before, a major guessing game a lot of the time. We can’t control people’s lives for decades to properly assess the effect that diet and lifestyle have on their health.

That’s always important to keep in the back of your mind when reading studies and headlines.

What else should be on your mind: how would this study play out in real life, and not in a controlled or semi-controlled environment? Would normal people be able to sustain the intervention?

Content should reflect the limitations of the research, and not use it like it’s fact when it’s not.

That’s just the responsible way to educate people.

As someone who’s searching for answers, it’s important to keep your mind open, and if something pops up that’s convincing, be ready to change your view. Nutrition evolves all the time, and we need to keep up with new findings.

Nutrition research can be complicated, but if you know the basics, you’ll be able to separate a lot of fiction from fact.